Publications

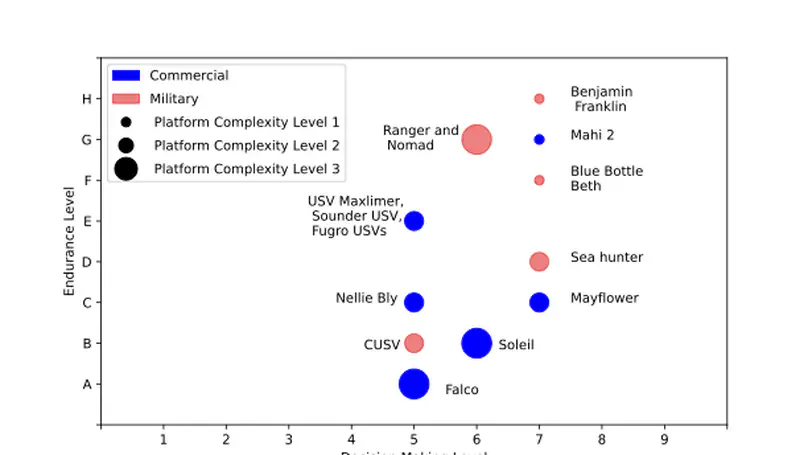

The desire to operate crewless platforms autonomously for months requires platforms that can sense their current state, maintain themselves, and perform long-term mission planning tasks to optimize their effectiveness as they degrade. How these long-term tasks are currently organized, executed, and regulated on human-crewed vessels remains largely unexplored, compared with the more immediate tasks of navigation and hazard avoidance. This paper presents a series of interrelated explorations of longer-term mission planning in a fully autonomous framework. Based on the current state of the art, a new three-component ranking scale for crewless platforms is proposed. Semi-structured interviews with retired military crews and commercial mariners were used to identify the planning tasks that crews currently carry out. At the end of the interview process, the themes from all interviews were reviewed and organized into an affinity diagram. The interviews revealed a surprising diversity of approaches, especially for tasks beyond machinery health assessment. Complementing this bottom-up analysis, a top-down analysis using a modified STAMP/STPA framework identifies the critical information paths and control structures surrounding these tasks. Integrating the results of these two complementary analyses identifies gaps in our current ability to achieve long-term autonomous operations. Three demonstration cases are proposed to guide the development of approaches that fill these gaps.

The design of many real-life engineering systems involves optimization according to multiple, often conflicting, objectives. In this paper, an algorithm called the spreading multi-objective particle swarm optimizer (SMOPSO) is developed and tested on optimization problems with two objectives. The motivation for SMOPSO is to promote a high diversity of solutions in two-objective particle swarm optimization. This is attempted through a spreading function based on neighboring particle positions and an archive controller that discriminates based on particle spacing. The spreading function directs non-dominated particles away from their nearest neighbor, aiming for evenly spaced solutions as the particles “spread out”. To test whether this approach can improve Pareto front diversity, SMOPSO's performance is compared with that of two benchmark algorithms. Preliminary results suggest that the proposed algorithm may improve the diversity of solutions for a limited selection of optimization problems, but at the expense of other important performance measures, which are discussed in this paper. SMOPSO's performance degrades on more difficult optimization problems, such as those with multiple fronts and narrow global minima. An example application of SMOPSO to a theoretical two-objective high-speed planing craft design problem is also given.
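The abstract names two diversity mechanisms: a spreading function that pushes non-dominated particles away from their nearest neighbor, and an archive controller that discriminates on spacing. Their exact formulas are not given here, so the sketch below is only an illustration under stated assumptions, not the paper's SMOPSO implementation. It assumes both objectives are minimized and that distances are Euclidean. It models the spreading term as a unit vector away from the nearest neighbor, scaled by a hypothetical `weight`. It models the archive controller as repeatedly dropping the most crowded member. All function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Vector = Tuple[float, ...]


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if objective vector a Pareto-dominates b (all objectives minimized)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def non_dominated(objs: List[Vector]) -> List[int]:
    """Indices of objective vectors not dominated by any other vector."""
    return [i for i, f in enumerate(objs) if not any(dominates(g, f) for g in objs)]


def nearest_neighbor(i: int, pts: List[Vector]) -> int:
    """Index of the point closest (Euclidean) to pts[i], excluding i; needs >= 2 points."""
    others = [j for j in range(len(pts)) if j != i]
    return min(others, key=lambda j: math.dist(pts[i], pts[j]))


def spreading_term(i: int, positions: List[Vector], weight: float = 0.1) -> Vector:
    """Hypothetical spreading term: unit vector from particle i's nearest neighbor
    toward particle i, scaled by `weight`, to be added to particle i's velocity."""
    j = nearest_neighbor(i, positions)
    diff = [a - b for a, b in zip(positions[i], positions[j])]
    norm = math.hypot(*diff)
    if norm == 0.0:
        return tuple(0.0 for _ in diff)  # coincident particles: no preferred direction
    return tuple(weight * d / norm for d in diff)


def prune_archive(objs: List[Vector], capacity: int) -> List[Vector]:
    """Hypothetical spacing-based archive controller: while over capacity, remove the
    member whose nearest neighbor in objective space is closest (the most crowded)."""
    if capacity < 1:
        raise ValueError("capacity must be at least 1")
    archive = list(objs)
    while len(archive) > capacity:
        crowded = min(
            range(len(archive)),
            key=lambda i: math.dist(archive[i], archive[nearest_neighbor(i, archive)]),
        )
        archive.pop(crowded)
    return archive


if __name__ == "__main__":
    objs = [(0.0, 4.0), (1.0, 1.0), (1.1, 0.9), (4.0, 0.0), (2.0, 2.0)]
    front_idx = non_dominated(objs)
    print(front_idx)  # [0, 1, 2, 3]
    front = [objs[i] for i in front_idx]
    print(prune_archive(front, 3))  # drops one of the two nearly coincident points
    print(spreading_term(0, [(0.0, 0.0), (3.0, 4.0), (10.0, 10.0)]))
```

Removing the member with the smallest nearest-neighbor distance is one simple way to favor evenly spaced archive entries. Crowding-distance or grid-based schemes are common alternatives, and the paper's actual controller may differ.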